Projects

- ArchiSpace: Space architecture in analogue environments

- Robotic Terrain Mapping

- Discretized and Circular Design

- CV- and HRI-supported Planting

- Lunar Architecture and Infrastructure

- Robotic Drawing

- Rhizome 2.0: Scaling-up of Rhizome 1.0

- Rietveld Chair Reinvented

- Cyber-physical Furniture

- Rhizome 1.0: Rhizomatic off-Earth Habitat

- D2RP for Bio-Cyber-Physical Planetoids

- Circular Wood for the Neighborhood

- Computer Vision and Human-Robot Interaction for D2RA

- Cyber-physical Architecture

- Hybrid Componentiality

- 100 Years Bauhaus Pavilion

- Variable Stiffness

- Scalable Porosity

- Robotically Driven Construction of Buildings

- Kite-powered Design-to-Robotic-Production

- Robotic(s in) Architecture

- F2F Continuum and E-Archidoct

- Space-Customizer

Cyber-physical Architecture

Year: 2016-2022

Project leader: Henriette Bier

Project team: Henriette Bier, et al.

Collaborators / Partners: Politecnico Milano, Cornell

Dissemination: Spool CpA 2 (2019), Spool CpA 3 (2020)

Integrating cyber-physical systems into the built environment requires coupling Design-to-Robotic-Production (D2RP) with Design-to-Robotic-Operation (D2RO) processes. This implies that architecture is fitted with sensor-actuators serving environmental control and functional adaptation. These sensor-actuators connect people with the built environment via embedded and wearable wireless sensor-actuator networks and thereby facilitate spatial and sensorial reconfiguration.

Several experiments were implemented. Some involved building envelopes acting as interfaces that respond to both users and environmental conditions, opening and closing on demand to provide shading and views.

Others involved the design of interactive urban furniture, which required analysing the site and the activities possible at the chosen location. Mapping movement and activities yields an urban landscape that facilitates activities such as running, walking and sitting.

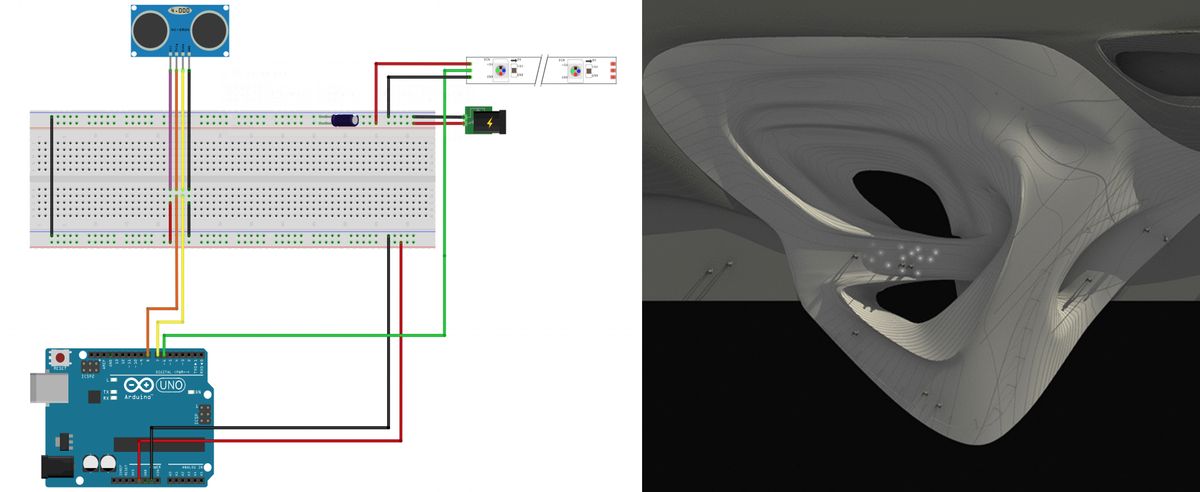

Integrated interactive lighting, developed using Arduino and sensor-actuators, engages playfully with people by following or preceding them. The lights change intensity, color and on/off pattern depending on presence.
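The following or preceding behaviour can be illustrated with a minimal sketch. It is not the project's Arduino code: the one-dimensional light layout, the `lead` and `radius` parameters and the linear fall-off are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Light:
    position: float  # metres along a path (hypothetical layout)


def light_intensities(lights, person_pos, person_velocity, lead=1.0, radius=2.0):
    """Return one intensity in [0, 1] per light.

    The bright spot is centred `lead` metres from the person in their
    direction of travel (lead > 0 precedes, lead < 0 follows, 0 tracks).
    Intensity falls off linearly to zero at `radius` metres from that spot.
    A person_pos of None means nobody is present, so all lights are off.
    """
    if person_pos is None:
        return [0.0 for _ in lights]
    # Sign of the velocity: -1, 0 or 1
    direction = (person_velocity > 0) - (person_velocity < 0)
    focus = person_pos + direction * lead
    return [max(0.0, 1.0 - abs(light.position - focus) / radius) for light in lights]


# Six lights at 0..5 m; a person at 2 m walking in the positive direction
lights = [Light(float(x)) for x in range(6)]
levels = light_intensities(lights, person_pos=2.0, person_velocity=0.8)
```

With the assumed values, the brightest light is the one at 3 m, one metre ahead of the person. On a microcontroller, each intensity would typically be scaled to a PWM duty cycle.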

Integrating smart environmental control into buildings requires mapping the human body in movement. Intelligent distributed control is then designed by projecting the climate-control requirements onto the envelope. Integrating sensor-actuators with the structure requires consideration of how the material systems come together. Furthermore, intelligent control requires Machine Learning (ML) to process data from movement, touch, temperature, humidity and other sensors in order to adjust light intensity and color, ventilation, etc. In this context, actuation is based on predictions and decisions made with the help of ML, which is embedded into a routine decision tree that allows the system to learn and improve over time. Detailed scenario planning is usually carried out and then implemented technically using Arduino, Processing, Kinect and a webcam coupled with computer vision to detect objects and humans.
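As a rough illustration of a decision routine that improves over time, the sketch below walks a small fixed decision tree over sensor readings and adjusts one threshold whenever an occupant overrides the decision. The class name, sensor keys, thresholds and the fixed-step update rule are hypothetical and stand in for whatever ML model the project actually used.

```python
class VentilationController:
    """Toy decision routine with one learned parameter (all values hypothetical)."""

    def __init__(self, temp_threshold=24.0, step=0.5):
        self.temp_threshold = temp_threshold  # degrees C above which we ventilate
        self.step = step                      # size of each threshold correction

    def decide(self, reading):
        """Walk a small fixed decision tree and return actuation commands."""
        if not reading["presence"]:
            return {"light": 0.0, "ventilation": False}
        # Dim the lights when there is already plenty of ambient light
        light = 0.3 if reading["ambient_lux"] > 300 else 1.0
        ventilate = (reading["temperature"] > self.temp_threshold
                     or reading["humidity"] > 70)
        return {"light": light, "ventilation": ventilate}

    def feedback(self, temperature, occupant_wanted_ventilation):
        """Shift the threshold one step whenever the occupant overrode the decision."""
        predicted = temperature > self.temp_threshold
        if occupant_wanted_ventilation and not predicted:
            self.temp_threshold -= self.step
        elif predicted and not occupant_wanted_ventilation:
            self.temp_threshold += self.step


controller = VentilationController()
reading = {"presence": True, "ambient_lux": 500, "temperature": 23.0, "humidity": 40}
before = controller.decide(reading)
for _ in range(3):  # the occupant switches ventilation on manually three times
    controller.feedback(23.0, occupant_wanted_ventilation=True)
after = controller.decide(reading)
```

Under these assumptions the controller does not ventilate at 23 °C at first, but does so after three manual overrides have lowered its threshold to 22.5 °C.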

In another implementation, an interactive stage, D2RP is expressed via function-specialized components, while D2RO is in practice an extended form of Ambient Intelligence (AmI) enabled by a Cyber-Physical System (CPS). This CPS is built on a heterogeneous Wireless Sensor and Actuator Network (WSAN) whose nodes can respond to varying computational needs. Two principal and innovative functionalities are demonstrated in this implementation: (1) cost-effective yet robust Human Activity Recognition (HAR) via Support Vector Machine (SVM) and k-Nearest Neighbor (k-NN) classification models, and (2) corresponding reactions that promote the occupant's spatial experience and well-being by continuously regulating the color and intensity of illumination to match the activity in progress (Liu Cheng et al., 2018).
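The chain from activity recognition to illumination can be sketched with a minimal k-NN classifier in plain Python. Only the k-NN half of the pairing is shown; the SVM model is omitted. The two-value feature vectors, the training samples and the activity-to-illumination table are invented for illustration and are not taken from Liu Cheng et al. (2018).

```python
import math
from collections import Counter

# Hypothetical training data:
# (mean acceleration magnitude, acceleration variance) -> activity label
TRAINING = [
    ((0.1, 0.01), "sitting"),
    ((0.2, 0.02), "sitting"),
    ((1.0, 0.30), "walking"),
    ((1.2, 0.35), "walking"),
    ((2.5, 0.90), "running"),
    ((2.8, 1.10), "running"),
]

# Hypothetical lookup: activity -> (RGB color, intensity in [0, 1])
ILLUMINATION = {
    "sitting": ((255, 180, 100), 0.4),
    "walking": ((255, 255, 255), 0.7),
    "running": ((180, 220, 255), 1.0),
}


def classify(features, k=3):
    """Label a feature vector by majority vote among its k nearest samples."""
    nearest = sorted(TRAINING, key=lambda sample: math.dist(sample[0], features))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def illumination_for(features):
    """Map a sensor feature vector to an illumination setting via the recognized activity."""
    return ILLUMINATION[classify(features)]


activity = classify((1.1, 0.30))
color, intensity = illumination_for((1.1, 0.30))
```

k-NN needs no training phase beyond storing labelled samples, which suits low-power WSAN nodes, whereas an SVM would normally be trained offline and deployed as a fixed decision function.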